A complete walkthrough of every calculation behind the tool — from raw NVMe capacity to ESA protection factors, NVMe memory tiering, and VCF licence entitlement. No black boxes.

Table of Contents

- What the tool sizes

- Host specification inputs

- Management VM stack

- Compute sizing formula

- vSAN ESA storage pipeline

- Protection policies & PF table

- Final host count & limiter

- NVMe memory tiering

- External storage mode

- VCF licence entitlement

- Principal storage options (KB 416270)

- Assumptions & caveats

1. What the tool sizes

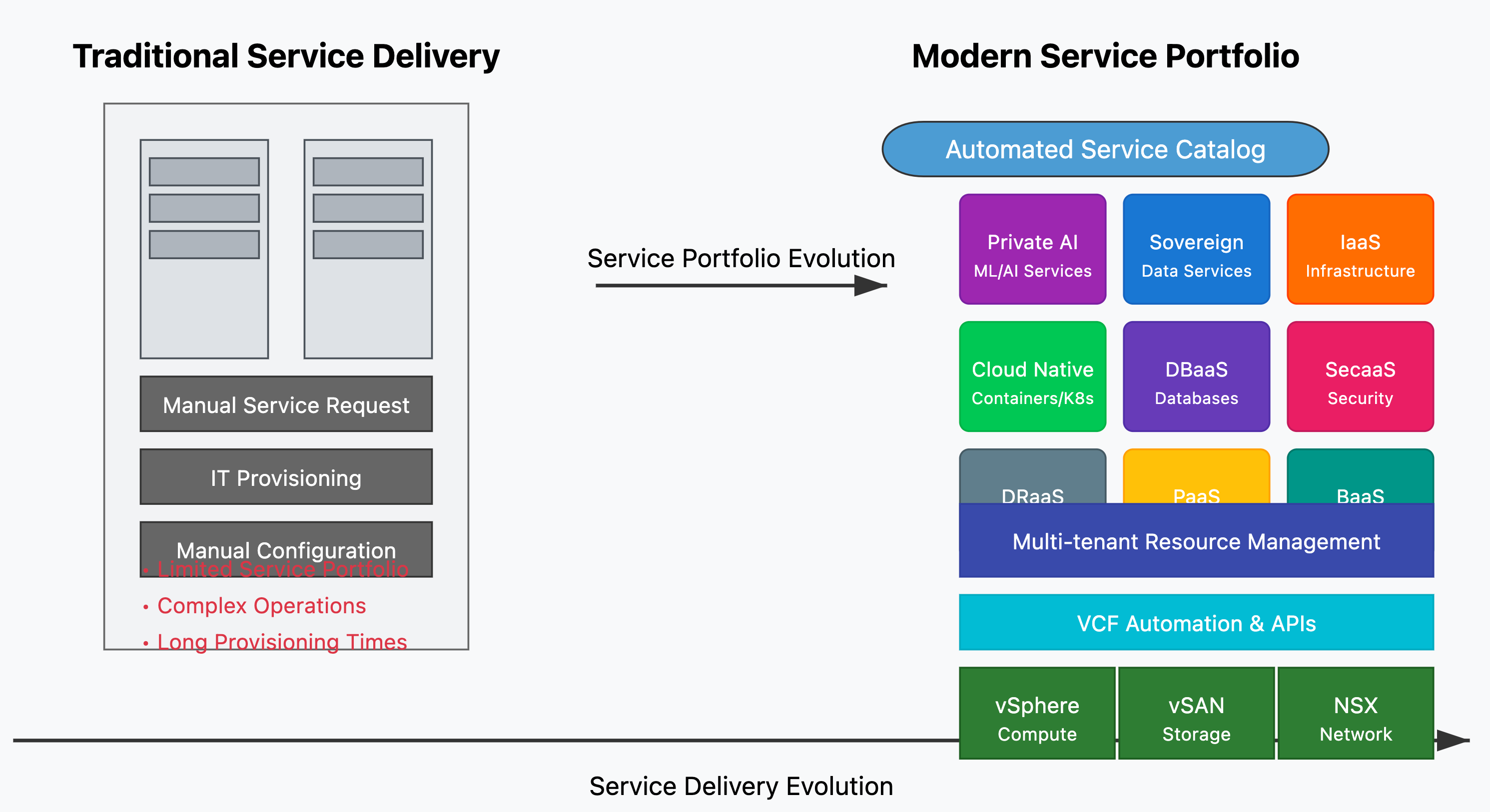

The VCF 9 Fleet Sizer calculates the minimum number of ESXi hosts required across a VMware Cloud Foundation deployment — one Management Domain and any number of VI Workload Domains. For each domain it independently determines whether CPU, memory, or storage is the binding constraint, and returns the host count driven by the most demanding dimension.

The sizer is built specifically for VCF 9 with vSAN ESA — the Express Storage Architecture that requires NVMe-only drives and operates as a single storage tier without a separate cache/capacity split. It also models external storage mode (Fibre Channel, NFS) where hosts are sized on compute and memory only, and a disaggregated NVMe memory tiering model unique to VCF 9.

⚠️ Planning aid only — not an official Broadcom tool. All outputs are estimates based on the inputs you provide. Validate every design against official Broadcom documentation, the VMware HCL, and field engineering guidance before procurement or deployment. Real-world DRR and vSAN overheads vary significantly by workload.

2. Host specification inputs

Every domain (management and each WLD) has an independent host specification. The tool does not assume all hosts are identical across domains — a management cluster might run 2×16c hosts while a production WLD uses 2×32c AI-optimised nodes.

| Input | Default | Used in | Notes |
|---|---|---|---|
| CPU Qty | 2 | Core count, licensing | Sockets per host |
| Cores per CPU | 16 | Core count, licensing | Physical cores; no hyperthreading multiplier applied |
| RAM (GB) | 1,024 | Memory sizing | Total usable host RAM |
| NVMe Qty | 6 | Storage sizing | NVMe drives per host (vSAN ESA only) |
| NVMe Size (TB) | 7.68 | Storage sizing | Decimal TB; converted to GB via ×1,000 |
| CPU Oversubscription | 2× | Usable vCPU | vCPU:pCPU ratio; applied before the reserve |
| RAM Oversubscription | 1× | Usable RAM | 1× = no oversubscription. Rarely exceed 1× for RAM |
| Compute Reserve % | 30% | Usable vCPU & RAM | Headroom withheld from placement (HA, overhead) |

Raw capacity per host formulas:

Host Cores = CPU Qty × Cores per CPU

Raw GB per Host = NVMe Qty × NVMe Size (TB) × 1,000

⚠️ No hyperthreading multiplier. The sizer deliberately does not multiply physical cores by 2 for hyperthreading. The benefit of logical threads is workload-specific and highly variable. Instead, the CPU oversubscription ratio gives you explicit control. A 2× ratio on a 32-core host models the same headroom as a 64-thread count at 1×, but you are making that choice knowingly.
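
As a minimal sketch, the two raw-capacity formulas above translate directly into code. The function names are illustrative and are not taken from the tool itself; the inputs are the table defaults.

```python
def host_cores(cpu_qty: int, cores_per_cpu: int) -> int:
    """Physical cores per host; no hyperthreading multiplier is applied."""
    return cpu_qty * cores_per_cpu


def raw_gb_per_host(nvme_qty: int, nvme_size_tb: float) -> float:
    """Raw NVMe capacity per host in decimal GB (1 TB = 1,000 GB)."""
    return nvme_qty * nvme_size_tb * 1000


# Table defaults: 2 sockets x 16 cores, 6 x 7.68 TB NVMe drives.
cores = host_cores(2, 16)
raw_gb = raw_gb_per_host(6, 7.68)
print(f"{cores} cores, {raw_gb:,.0f} GB raw per host")
```

With the defaults this gives 32 physical cores and roughly 46,080 GB of raw NVMe per host.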

3. Management VM stack

The Management Domain hosts a fixed stack of VCF infrastructure VMs. These are not user workloads — they are the control plane. Their combined vCPU, RAM, and disk demand is the entire sizing input for the management cluster. The tool carries an accurate per-component VM stack based on current VCF 9 T-shirt sizes from Broadcom documentation.

| Component | Sizes | vCPU range | RAM range | Disk range |
|---|---|---|---|---|
| vCenter Server (Mgmt) | S / M / L / XL | 4 – 24 | 21 – 58 GB | 694 – 2,283 GB |
| NSX Manager | M / L / XL | 6 – 24 | 24 – 96 GB | 300 – 400 GB |
| NSX Edge | S / M / L / XL | 2 – 16 | 4 – 64 GB | 200 GB |
| NSX Global Manager | S / M / L / XL | 4 – 24 | 16 – 96 GB | 300 – 400 GB |
| Avi Load Balancer | S / M / L | 8 – 24 | 24 – 48 GB | 128 – 512 GB |
| vCenter Server (WLD) | S / M / L / XL | 4 – 24 | 21 – 58 GB | 694 – 2,283 GB |
| VCF Operations (SDDC Mgr) | S / M / L / XL | 4 – 24 | 16 – 128 GB | 274 GB |
| VCF Operations Collector | S / M | 2 – 4 | 8 – 32 GB | 144 GB |
| VCF Operations for Logs | S / M / L | 12 – 48 | 24 – 96 GB | 1,590 GB |
| VCF Operations for Networks | L / XL / XXL | 12 – 48 | 24 – 96 GB | 1,590 GB |
| VCF Net. Collector | M / L / XL / XXL | 4 – 16 | 12 – 48 GB | 200 – 300 GB |
| Identity Manager | Embedded / HA | 0 – 32 | 0 – 64 GB | 0 – 400 GB |

Management sizing is deterministic: configure your component sizes, and the tool sums the total vCPU, RAM, and disk demand — no workload VM estimates needed.
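
The summation described above can be sketched as follows. This is an illustration under assumptions: the `MgmtVM` class, the three components chosen, and the node counts are hypothetical, and the per-VM figures simply reuse the lower bounds from the table rather than a confirmed mapping to a specific T-shirt size.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MgmtVM:
    name: str
    vcpu: int
    ram_gb: int
    disk_gb: int
    count: int = 1  # number of nodes of this component (assumed)


def total_demand(stack: list[MgmtVM]) -> tuple[int, int, int]:
    """Sum vCPU, RAM (GB) and disk (GB) across the whole management stack."""
    vcpu = sum(vm.vcpu * vm.count for vm in stack)
    ram = sum(vm.ram_gb * vm.count for vm in stack)
    disk = sum(vm.disk_gb * vm.count for vm in stack)
    return vcpu, ram, disk


# Hypothetical selection using the table's lower-bound figures.
stack = [
    MgmtVM("vCenter Server (Mgmt)", vcpu=4, ram_gb=21, disk_gb=694),
    MgmtVM("NSX Manager", vcpu=6, ram_gb=24, disk_gb=300, count=3),
    MgmtVM("VCF Operations", vcpu=4, ram_gb=16, disk_gb=274),
]
demand_vcpu, demand_ram, demand_disk = total_demand(stack)
print(demand_vcpu, demand_ram, demand_disk)  # 26 109 1868
```

The three totals are then sized against the management host specification in the same way as workload demand.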

4. Compute sizing formula

For Workload Domains, tenant demand is specified as VM count × per-VM averages for vCPU, RAM, and disk. Infrastructure VMs (NSX Edges, VKS Supervisor nodes) can optionally be included in the WLD demand totals. All demands are then sized against the host specification to determine the compute host floor.

WLD demand totals:

Demand vCPU = (VMs × vCPU/VM) + Infra vCPU

Demand RAM = (VMs × RAM/VM) + Infra RAM

Demand Disk = (VMs × Disk/VM) + Infra Disk

Usable capacity per host:

Usable vCPU/host = Host Cores × CPU Oversub × (1 − Reserve%)

Usable RAM/host = Host RAM × RAM Oversub × (1 − Reserve%)

Compute host floors (evaluated independently):

CPU Hosts = ⌈ Demand vCPU / Usable vCPU per host ⌉

RAM Hosts = ⌈ Demand RAM / Usable RAM per host ⌉

Example: 200 VMs × 4 vCPU = 800 vCPU demand. Host: 2×16c = 32 physical cores × 2× oversub × 0.70 (1 − 30% reserve) = 44.8 usable vCPU/host. CPU Hosts = ⌈ 800 / 44.8 ⌉ = ⌈ 17.86 ⌉ = 18 hosts.
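
The compute floors can be reproduced in a few lines. This is a sketch with illustrative function names; the 16 GB RAM per VM used for the memory floor is an assumed figure added for the example, not a value from the walkthrough.

```python
import math


def usable_per_host(capacity: float, oversub: float, reserve_pct: float) -> float:
    """Capacity available for placement after oversubscription and reserve."""
    return capacity * oversub * (1 - reserve_pct / 100)


def host_floor(demand: float, usable: float) -> int:
    """Minimum whole hosts needed to satisfy the demand."""
    return math.ceil(demand / usable)


# CPU floor: the worked example from the text.
demand_vcpu = 200 * 4                            # 800 vCPU
usable_vcpu = usable_per_host(32, 2.0, 30)       # about 44.8 vCPU per host
cpu_hosts = host_floor(demand_vcpu, usable_vcpu)

# RAM floor: 16 GB per VM is an assumption for illustration only.
demand_ram = 200 * 16                            # 3,200 GB
usable_ram = usable_per_host(1024, 1.0, 30)      # about 716.8 GB per host
ram_hosts = host_floor(demand_ram, usable_ram)

print(cpu_hosts, ram_hosts)  # 18 5
```

In this example CPU is the tighter of the two compute dimensions, so it would set the compute floor.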

5. vSAN ESA storage pipeline

vSAN ESA storage sizing is a sequential pipeline of capacity transformations. Each stage adds overhead for a specific reason. Starting from raw VM disk demand, the pipeline applies data reduction, swap space, protection overhead, free space reserve, and growth buffer — in that order — to arrive at the total raw capacity required and therefore the storage host floor.

Pipeline stages:

Step 1: VM Capacity GB = Demand Disk GB ÷ DRR (DRR = Dedup Ratio × Compression Ratio)

Step 2: Swap GB = Demand RAM GB × VM Swap% (100% for mgmt, configurable for WLD)

Step 3: Interim GB = VM Capacity GB + Swap GB

Step 4: Protected GB = Interim GB × Protection Factor (PF)

Step 5: With Free GB = Protected GB × (1 + vSAN Free%)

Step 6: Total Required = With Free GB × (1 + Growth%)

Storage host floor:

Effective Hosts = Total Hosts − Failures to Tolerate

Per-Host Requirement = Total Required GB ÷ Effective Hosts

Storage Hosts = ⌈ Total Required GB / Raw GB per Host ⌉ + Failures

Data Reduction Ratio (DRR)

The tool splits DRR into two separate inputs: Dedup Ratio and Compression Ratio. DRR = Dedup × Compression. Both default to 1.0 (no reduction) because real-world ratios depend entirely on data entropy — databases compress poorly, VDI golden images deduplicate extremely well. Using optimistic DRR values leads to undersized storage clusters.

⚠️ DRR above 2.0 is optimistic. Unless you have measured DRR from an equivalent workload in your environment, keep both ratios at 1.0. A DRR of 2.0 halves the VM capacity term in Step 1, and with it most of your storage requirement. If the real-world ratio comes in at 1.2, you’ll need significantly more hosts than planned.
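
To make the six pipeline stages and the DRR sensitivity concrete, here is a minimal sketch. The function names are illustrative, and the workload figures (100,000 GB of disk, 3,200 GB of RAM, 25% free space, 10% growth) are assumptions chosen for the example rather than tool defaults.

```python
import math


def total_required_gb(disk_gb, ram_gb, dedup, compression,
                      swap_pct, pf, free_pct, growth_pct):
    """Steps 1 to 6 of the pipeline, applied in order."""
    drr = dedup * compression
    vm_capacity = disk_gb / drr                    # Step 1
    swap = ram_gb * swap_pct / 100                 # Step 2
    interim = vm_capacity + swap                   # Step 3
    protected = interim * pf                       # Step 4
    with_free = protected * (1 + free_pct / 100)   # Step 5
    return with_free * (1 + growth_pct / 100)      # Step 6


def storage_hosts(total_gb, raw_gb_per_host, failures):
    """Storage host floor: capacity hosts plus failures to tolerate."""
    return math.ceil(total_gb / raw_gb_per_host) + failures


RAW_GB_PER_HOST = 46_080  # 6 x 7.68 TB drives
results = {}
for drr in (1.0, 1.2, 2.0):
    total = total_required_gb(100_000, 3_200, dedup=drr, compression=1.0,
                              swap_pct=100, pf=1.5, free_pct=25, growth_pct=10)
    results[drr] = storage_hosts(total, RAW_GB_PER_HOST, failures=1)
    print(f"DRR {drr}: {total:,.0f} GB required, {results[drr]} hosts")
```

Under these assumed inputs the storage floor is 6 hosts at DRR 1.0, 5 at 1.2, and 4 at 2.0, which is why planning on 2.0 and landing at 1.2 leaves the cluster short.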

TiB conversion

The tool uses binary TiB throughout. NVMe drives are marketed in TB decimal (1 TB = 1,000 GB). Conversion: 1 TB = 1,000 GB = 0.9095 TiB. A 6× 7.68 TB host = approximately 41.9 TiB raw per host after conversion.
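
The conversion factor comes from the ratio of decimal to binary terabytes. A short sketch (the names are illustrative) reproduces both the 0.9095 factor and the per-host figure:

```python
# 1 TB = 1000^4 bytes, 1 TiB = 1024^4 bytes.
TIB_PER_TB = 1000**4 / 1024**4


def tb_to_tib(tb: float) -> float:
    """Convert decimal terabytes to binary tebibytes."""
    return tb * TIB_PER_TB


raw_tib = tb_to_tib(6 * 7.68)
print(f"factor = {TIB_PER_TB:.4f}, raw per host = {raw_tib:.1f} TiB")
```

This prints a factor of 0.9095 and 41.9 TiB for the default 6× 7.68 TB host.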

6. Protection policies & PF table

The Protection Factor (PF) is the storage overhead multiplier applied to usable data to account for redundancy. It is determined by your chosen RAID type, FTT (Failures to Tolerate), and for RAID-5, the stripe width. The tool enforces the minimum host count per policy.

| Policy | PF | Min Hosts | FTT | Notes |
|---|---|---|---|---|
| RAID-5 2+1 FTT=1 | 1.50x | 3 | 1 | Default: best balance of protection and efficiency |
| RAID-5 4+1 FTT=1 | 1.25x | 6 | 1 | Lower overhead but needs 6+ hosts |
| RAID-6 4+2 FTT=2 | 1.50x | 6 | 2 | Two simultaneous drive failures tolerated |
| Mirror FTT=1 | 2.00x | 3 | 1 | Simple mirror, highest rebuild performance |
| Mirror FTT=2 | 3.00x | 5 | 2 | Three copies of every object |
| Mirror FTT=3 | 4.00x | 7 | 3 | Maximum redundancy, very high storage cost |
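
The PF values in the table follow from the layout of each policy: an erasure-coded stripe stores (data + parity) blocks for every data blocks written, and a mirror stores FTT + 1 full copies on at least 2 × FTT + 1 hosts. The sketch below uses illustrative function names and only checks that arithmetic; the RAID-5/6 minimum host counts are taken from the table as given.

```python
def erasure_pf(data: int, parity: int) -> float:
    """Overhead multiplier for a data+parity stripe."""
    return (data + parity) / data


def mirror_pf(ftt: int) -> float:
    """A mirror keeps FTT + 1 full copies of each object."""
    return float(ftt + 1)


def mirror_min_hosts(ftt: int) -> int:
    """Mirroring needs 2 x FTT + 1 hosts."""
    return 2 * ftt + 1


print(erasure_pf(2, 1), erasure_pf(4, 1), erasure_pf(4, 2))  # 1.5 1.25 1.5
for ftt in (1, 2, 3):
    print(ftt, mirror_pf(ftt), mirror_min_hosts(ftt))
```

The mirror rows come out as 2.0x on 3 hosts, 3.0x on 5 hosts, and 4.0x on 7 hosts, matching the table.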

7. Final host count & limiter

The final host count is the maximum across four independent floors: CPU hosts, RAM hosts, storage hosts, and the policy minimum. The tool identifies which floor is binding and labels it the Limiter.

Final Hosts = max( CPU Hosts, RAM Hosts, Storage Hosts, Policy Min )

| Limiter | Meaning | Common cause |
|---|---|---|
| Compute | CPU is the binding constraint | High vCPU density, low oversub ratio |
| Memory | RAM is the binding constraint | Memory-intensive workloads, RAM oversub at 1× |
| Storage | vSAN ESA capacity drives the count | Large disk demand, high PF, low DRR, insufficient NVMe |
| Policy | Protection policy min host count | Small cluster: compute is fine but the policy enforces a minimum of N hosts |

When storage is the limiter, your NVMe capacity per host is insufficient to hold the protected dataset within the compute-determined host count. Solutions: increase NVMe drive count or size, relax the vSAN free% reserve, or accept a higher host count.
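
Picking the final host count and its limiter amounts to a labelled maximum, which can be sketched as below. The function name is illustrative, and the tie-breaking order (Compute, Memory, Storage, Policy) is an assumption, since the walkthrough does not say how the tool resolves equal floors.

```python
def final_hosts(cpu_hosts: int, ram_hosts: int,
                storage_hosts: int, policy_min: int) -> tuple[int, str]:
    """Return (host count, limiter label) for one domain."""
    floors = {
        "Compute": cpu_hosts,
        "Memory": ram_hosts,
        "Storage": storage_hosts,
        "Policy": policy_min,
    }
    # max() returns the first key with the largest value, so ties resolve
    # in the dict's insertion order (an assumption, see the note above).
    limiter = max(floors, key=floors.get)
    return floors[limiter], limiter


print(final_hosts(18, 5, 6, 3))  # (18, 'Compute')
print(final_hosts(4, 5, 9, 3))   # (9, 'Storage')
print(final_hosts(2, 2, 2, 3))   # (3, 'Policy')
```

The third call shows the small-cluster case, where every resource floor is satisfied by two hosts but the protection policy still requires three.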

8. NVMe memory tiering (VCF 9)

VCF 9 introduces NVMe-backed memory tiering, where fast NVMe drives act as a memory extension. A partition of each NVMe drive is set aside as a memory tier — not storage — allowing effective RAM per host to exceed physical DRAM installed. This can reduce the host count when memory is the sizing constraint.

Tiering formulas:

Partition GB = min( Drive GB, DRAM × NVMe Ratio, 512 GB cap )

NVMe Ratio Used = Partition GB ÷ Host DRAM GB

Effective Host RAM = Host DRAM × (1 + NVMe Ratio Used)

Tiered Demand RAM = ( Eligible Demand ÷ (1 + NVMe Ratio Used) ) + Ineligible Demand

Key inputs: Eligibility % (what fraction of workload is not latency-sensitive), NVMe-to-DRAM ratio (GB of NVMe tier per GB of DRAM), and tier drive size (separate from vSAN data drives). The effective RAM and reduced demand figure feed back into the RAM host floor calculation.

⚠️ Tiering caveats. NVMe tiering suits read-heavy workloads with temporal locality. It is not appropriate for latency-sensitive databases, real-time analytics, or anything where memory bandwidth consistency matters. The eligibility % input requires honest assessment of your workload mix.
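
The four tiering formulas can be sketched as a single function. The function name, the returned keys, and the example inputs (a 1,600 GB tier drive, a 1.0 NVMe-to-DRAM ratio, 3,200 GB of RAM demand at 50% eligibility) are assumptions for illustration. The sketch stops at the two derived figures and does not model how the tool feeds them into the RAM host floor.

```python
def memory_tiering(host_dram_gb: float, drive_gb: float, nvme_ratio: float,
                   demand_ram_gb: float, eligible_pct: float,
                   cap_gb: float = 512) -> dict:
    """Apply the tiering formulas for one host spec and one RAM demand."""
    partition_gb = min(drive_gb, host_dram_gb * nvme_ratio, cap_gb)
    ratio_used = partition_gb / host_dram_gb
    effective_ram_gb = host_dram_gb * (1 + ratio_used)

    eligible = demand_ram_gb * eligible_pct / 100
    ineligible = demand_ram_gb - eligible
    tiered_demand_gb = eligible / (1 + ratio_used) + ineligible

    return {
        "partition_gb": partition_gb,
        "ratio_used": ratio_used,
        "effective_ram_gb": effective_ram_gb,
        "tiered_demand_gb": tiered_demand_gb,
    }


tier = memory_tiering(host_dram_gb=1024, drive_gb=1600, nvme_ratio=1.0,
                      demand_ram_gb=3200, eligible_pct=50)
for key, value in tier.items():
    print(f"{key}: {value:,.2f}")
```

Here the 512 GB cap is the binding term, so the ratio actually used is 0.5 even though 1.0 was requested: effective RAM becomes 1,536 GB and the RAM demand falls from 3,200 GB to about 2,667 GB.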

9. External storage mode

Both the Management Domain and each WLD can be toggled to External Array mode, which models Fibre Channel or NFS as principal storage. In this mode, the vSAN ESA storage pipeline is bypassed entirely. Host count is determined by the CPU and RAM floors (subject to the policy minimum), and the user supplies an estimated array capacity for documentation.

Final Hosts (ext) = max( CPU Hosts, RAM Hosts, Policy Min ), with the storage floor removed

The Limiter can only be Compute, Memory, or Policy. No ESA capacity, PF, or per-host storage figures are calculated for external domains.

Entitlement impact

Every VCF core licence includes 1 TiB of vSAN raw storage entitlement. When a domain runs external storage, those cores are still licensed at the same cost but the bundled vSAN storage is unused.

Forfeited TiB = Licensed Cores × 1 TiB/core

For a 10-host domain with 2×32c hosts (640 licensed cores), that’s 640 TiB of vSAN entitlement forfeited: storage the customer is paying for but not using. The tool surfaces this inline, in the Fleet License Summary, and in the export report so the commercial impact is visible before procurement conversations begin.
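
The forfeited-entitlement figure is a single multiplication, sketched here with an illustrative function name:

```python
def forfeited_tib(hosts: int, cpu_qty: int, cores_per_cpu: int,
                  tib_per_core: float = 1.0) -> float:
    """vSAN entitlement left unused when a domain runs external storage."""
    licensed_cores = hosts * cpu_qty * cores_per_cpu
    return licensed_cores * tib_per_core


print(forfeited_tib(10, 2, 32))  # 640.0
```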

10. VCF licence entitlement calculation

VCF 9 is licensed per core. The tool calculates total core count across the fleet and derives the vSAN storage entitlement bundled with those licences.

Mgmt Cores = Mgmt Hosts × Host Cores

WLD Cores = Σ( WLD Hosts × Host Cores )

Entitlement (TiB) = ( Mgmt Cores + WLD Cores ) × 1 TiB/core

Fleet vSAN Raw TiB = Σ( Hosts × NVMe Qty × NVMe TB × 0.9095 )

Add-on Required = max( 0, Fleet Raw TiB − Entitlement TiB )

If raw capacity exceeds entitlement, the difference is flagged as Add-on TiB Required: additional vSAN capacity licensing needed beyond what’s included in core licences. External storage domains exclude their array capacity from the fleet raw total.
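
A fleet-level sketch of the entitlement calculation follows. The `Domain` class and the two example domains (a 4-host management domain and an 18-host WLD, both on the default host spec) are assumptions for illustration. External-storage domains still contribute cores but add nothing to the raw vSAN total.

```python
from dataclasses import dataclass

TIB_PER_TB = 0.9095  # conversion factor used in the walkthrough


@dataclass(frozen=True)
class Domain:
    hosts: int
    cpu_qty: int
    cores_per_cpu: int
    nvme_qty: int = 0
    nvme_tb: float = 0.0
    external: bool = False  # True when the domain uses an external array

    @property
    def cores(self) -> int:
        return self.hosts * self.cpu_qty * self.cores_per_cpu

    @property
    def raw_tib(self) -> float:
        if self.external:
            return 0.0
        return self.hosts * self.nvme_qty * self.nvme_tb * TIB_PER_TB


def fleet_licence(domains: list[Domain]) -> tuple[float, float, float]:
    """Return (entitlement TiB, fleet raw TiB, add-on TiB required)."""
    entitlement = sum(d.cores for d in domains) * 1.0  # 1 TiB per core
    raw = sum(d.raw_tib for d in domains)
    return entitlement, raw, max(0.0, raw - entitlement)


fleet = [
    Domain(hosts=4, cpu_qty=2, cores_per_cpu=16, nvme_qty=6, nvme_tb=7.68),
    Domain(hosts=18, cpu_qty=2, cores_per_cpu=16, nvme_qty=6, nvme_tb=7.68),
]
entitlement, raw, addon = fleet_licence(fleet)
print(f"{entitlement:.0f} TiB entitled, {raw:.1f} TiB raw, {addon:.1f} TiB add-on")
```

For this assumed fleet, 704 cores give 704 TiB of entitlement against roughly 922 TiB of raw capacity, leaving about 218 TiB to be covered by add-on licensing.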

11. Principal storage options in VCF 9 (KB 416270)

VCF 9 supports a broader set of principal storage options than previous versions. Some are available via standard greenfield workflows; others require the Converge workflow. This distinction matters — it affects automation, LCM, and Day 2 operations.

| Storage Model | Mgmt Default | Mgmt Additional | VI WLD | Method |
|---|---|---|---|---|
| vSAN ESA | Principal | Principal | Principal | 🟢 Greenfield |
| vSAN OSA | Principal | Principal | Principal | 🟢 Greenfield |
| Storage Cluster (disagg. vSAN) | - | Principal | Principal | 🟢 Greenfield |
| Compute-Only Cluster | - | Principal | Principal | 🟢 Greenfield |
| Fibre Channel (FC) | Principal | Principal + Supp | Principal + Supp | 🟢 Greenfield |
| NFS v3 | Principal | Principal + Supp | Principal + Supp | 🟢 Greenfield |
| iSCSI | Principal* | Principal* | Principal* | 🔄 Converge |
| NFS v4.1 | Principal* | Principal* | Principal* | 🔄 Converge |
| FCoE | Principal* | Principal* | Principal* | 🔄 Converge |
| NVMe/FC · NVMe/TCP · NVMe/RDMA | Principal* | Principal* | Principal* | 🔄 Converge |

* Via Converge workflow: deploy ESXi 9 → configure target datastore → deploy vCenter 9 → import into VCF 9 using Converge (management) or Import vCenter (WLD).

⚠️ Day 2 operations constraint: For non-LCM Day 2 operations (host commissioning, adding/removing hosts or clusters), perform the operation in vCenter first, then run Sync Inventory in VCF Operations. If this step is skipped, lifecycle management in VCF Operations will be blocked for those hosts and clusters.

Source: Broadcom KB Article 416270

12. Assumptions & caveats

| Assumption | Detail |
|---|---|
| Single cluster per domain | Each WLD is modelled as one cluster. Multi-cluster WLDs are not supported. |
| Homogeneous hosts | All hosts within a domain use the same spec. Mixed-node clusters are not modelled. |
| vSAN ESA only | The storage pipeline models ESA only. vSAN OSA has different overhead characteristics. |
| Growth is a flat buffer | Growth % is applied once, not compounded year-over-year. Add headroom manually for multi-year plans. |
| VM Swap fixed at 100% for mgmt | The management domain’s swap requirement is not user-configurable. |
| No stretched cluster modelling | Stretched clusters double host count and require witness nodes; they are not currently modelled. |
| Flat DRR across all data | A single DRR applies to the entire disk demand. Mixed workloads with varying compressibility are not modelled per-VM. |
| No explicit vSAN CPU/RAM overhead | vSAN ESA consumes a small amount of host CPU and memory. Include this in your Compute Reserve % input. |

🚫 Not an official Broadcom tool. This sizer is an independent planning aid built by vmtechie.blog. It is not endorsed by or affiliated with Broadcom. All figures are estimates. Validate every design against official Broadcom TechDocs, VMware HCL, and field engineering guidance before procurement or deployment.